What if I told you your credit union is making thousands of deposits every week that never show up on your balance sheet?

Not member deposits. Not capital contributions. Something more valuable — and completely invisible to your board, your examiners, and your strategic plan.

I walked into a credit union’s back office last year and watched a BSA analyst toggle between five separate systems to clear a single alert. She pulled the member’s transaction history from the core, cross-referenced it against a watchlist in a second system, checked the previous alert disposition in a third, drafted a tracker note in a fourth, and documented the whole thing in a fifth. She’d been doing this for 22 years. She was extraordinarily good at it. And she was drowning.

Every time she clears an alert, she’s making a deposit. Every time your lending team reviews an exception, they’re making a deposit. Every time your compliance officer formats a SAR narrative the way your examiner prefers it, every time a member service rep learns that Mrs. Chen prefers email over phone, every time your fraud team identifies a seasonal pattern in ACH returns — deposit, deposit, deposit.

These are deposits of institutional intelligence. And right now, most credit unions are discarding them the moment they happen.

The Banking Metaphor You Already Understand

You know how compounding works. A credit union CEO doesn’t need me to explain the time value of money. So let me use a language you think in every day.

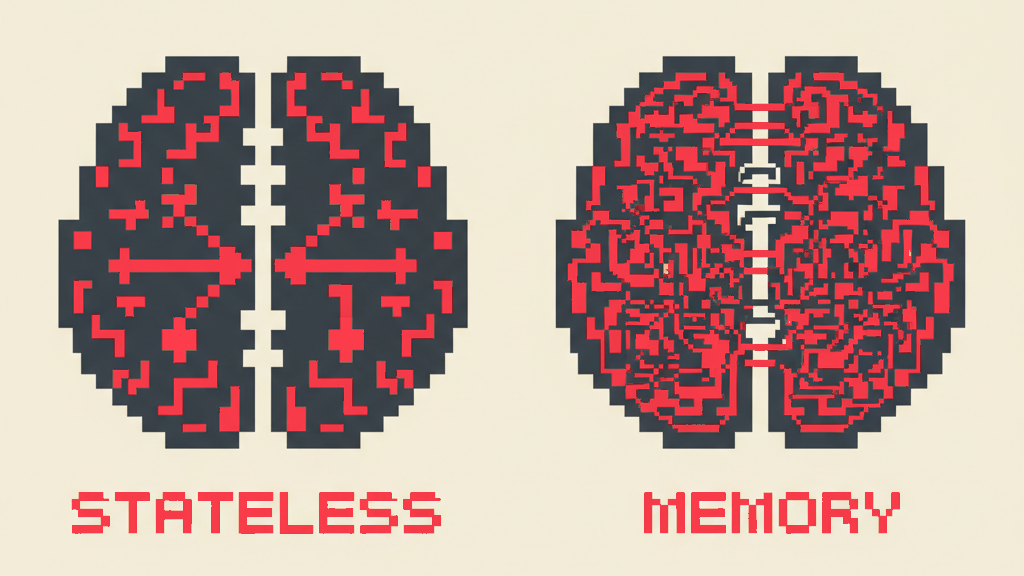

A stateless AI tool — the chatbot, the copilot, the generic assistant — is a checking account with a zero balance. Every interaction is a withdrawal. The system answers a question, processes a request, handles a task. Then it resets. The balance goes back to zero. Tomorrow, it knows nothing about what happened today. You made a deposit that was immediately and automatically withdrawn.

A stateful AI agent — what we call a Runner at Runline — is a high-yield savings account that never allows withdrawals. Every interaction is a deposit. Every deposit earns interest. And the interest compounds.

After one month, the balance is modest. Your BSA Runner has processed 200 alerts and learned which ones are false positives for your specific membership base. Your lending Runner has reviewed 50 applications and started recognizing your institution’s risk appetite on commercial real estate. Your compliance Runner has drafted 15 SAR narratives and noticed that your examiner consistently asks for more detail on the “suspicious activity” section.

After six months, the balance is substantial. I’ve seen this firsthand at a partner credit union near a military base: the BSA Runner now clears 94% of routine alerts autonomously because it knows — from six months of deposits — that the off-base businesses’ weekly cash deposits, the military payroll cycle patterns, and the seasonal PCS spending spikes are all legitimate. The lending Runner flags applications that fall outside historical approval patterns before a human reviews them. The compliance Runner formats every document the way that specific examiner prefers, because it has six months of feedback deposits earning compound interest.

After eighteen months, the balance is transformative. Your Runners don’t just respond to work. They anticipate it. The BSA Runner surfaces a cluster of three members showing simultaneous unusual activity — not because any individual alert triggered, but because eighteen months of pattern recognition identified a correlation your human analysts hadn’t noticed. That’s not a smarter model. That’s compound intelligence.

As one venture capitalist recently put it on X, in a post that went viral for good reason: “Every session a user spends inside a well-architected AI system is a deposit. The system learns their editing patterns, their risk tolerance, their preferences — implicitly, without being told. After six months of daily use, that system knows how you work in ways you couldn’t fully articulate yourself. That’s not a product feature. That’s a compounding asset.”

He was talking about AI startups broadly. But the insight lands even harder in credit unions, where institutional knowledge is literally the competitive advantage — and where the people who hold that knowledge are retiring at a rate of 11,000 per day.

What Gets Deposited

In Article 9, I described five layers of institutional context — from written SOPs down to risk tolerance and institutional values. Each layer represents a different category of deposit. Let me make this concrete.

Operational pattern deposits. At Heartland Credit Union, I watched the BSA team work through their alert queue. Every alert they process teaches the system about their membership’s normal behavior. Maria’s $4,000 Tuesday cash deposits from her flower shop. The construction company’s seasonal revenue cycle. The university town’s August and January disbursement pattern. A generic AI sees each of these as isolated data points. A stateful agent sees them as a growing ledger of institutional truth — each entry making the next judgment more accurate.

Examiner preference deposits. Your examiner flagged weak CTR documentation last cycle. Your compliance officer corrected the Runner’s narrative format three times in the first month. By month four, the Runner produces documentation that anticipates your examiner’s specific expectations. Nobody programmed “Examiner Johnson prefers X” into a rule; the accumulated corrections compounded into implicit understanding. This is the kind of knowledge that takes a human analyst years to develop. A stateful agent can develop it in months, because it learns from every correction made across the team, not only from the cases a single analyst happened to handle.

Member communication deposits. Does your credit union say “Dear Member” or “Hi Sarah”? Does your outbound communication use formal language or conversational tone? Do you sign emails with individual names or “Your Credit Union Team”? A generic AI guesses, or uses a financial services template that sounds like every other institution. A stateful agent that has processed six months of your actual member communications has absorbed your voice. That voice is a deposit — and it compounds every time the agent drafts a communication that your team approves without edits.

Risk tolerance deposits. How aggressively does your credit union pursue indirect auto lending? How conservative is your board on CRE concentration? What’s your appetite for small-dollar consumer loans in a rising rate environment? Every lending decision your team makes — approve, decline, send to committee — is a deposit that teaches the system your institution’s actual risk appetite. Not the policy manual risk appetite. The real one. The one that lives in the judgment calls your experienced lenders make a hundred times a month. At one CUSO I worked with, the SOPs were “sprinkled across people’s computers, tribal knowledge in people’s heads.” The real risk appetite wasn’t written down anywhere. It lived in the lending team’s muscle memory.

Workflow efficiency deposits. Every time your team corrects an agent’s work — “no, we do it this way” — that correction is a deposit. Every approval, every rejection, every escalation path your staff chooses teaches the system how your institution actually operates versus how the policy manual says it should. After enough deposits, the gap between the agent’s first draft and the human’s final version narrows toward zero. That narrowing is compound interest, measured in hours saved per week.
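One way to make that narrowing visible is to compare each agent draft with the version your staff finally approved and watch the difference shrink. The sketch below is a minimal illustration, not Runline's actual metric. It uses Python's standard `difflib.SequenceMatcher`; the function name `draft_gap` and the sample narratives are hypothetical.

```python
from difflib import SequenceMatcher


def draft_gap(agent_draft: str, human_final: str) -> float:
    """Return how much of the text changed, from 0.0 (no edits) to 1.0.

    Hypothetical metric: 1 minus difflib's similarity ratio, computed on
    whitespace-separated words so small wording edits give small gaps.
    """
    matcher = SequenceMatcher(None, agent_draft.split(), human_final.split())
    return 1.0 - matcher.ratio()


# Made-up drafts from two points in time, for illustration only.
early_draft = "Member made several cash deposits. Activity is suspicious."
early_final = "Member made four cash deposits of $9,500 between March 3 and March 7."
later_draft = "Member made four cash deposits of $9,400 between May 1 and May 5."
later_final = "Member made four cash deposits of $9,400 between May 1 and May 6."

early_gap = draft_gap(early_draft, early_final)
later_gap = draft_gap(later_draft, later_final)
print(f"early gap: {early_gap:.2f}, later gap: {later_gap:.2f}")
```

Tracked week over week, a falling gap is a rough proxy for the hours your team no longer spends rewriting first drafts.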

The Withdrawal Problem

Here’s what happens with stateless AI — the chatbot, the copilot, the tool you log into and out of.

Every interaction starts at zero. The system has no memory of yesterday’s work. Your BSA analyst cleared 50 alerts last week using the chatbot, and this week the chatbot starts fresh — same generic thresholds, same textbook assumptions, same inability to recognize Maria’s flower shop deposits. Those 50 interactions were withdrawals against the analyst’s time with zero balance accumulated.

This isn’t a design flaw in most AI products. It’s an architectural choice. As the post I quoted earlier observed: “The architectural decision that separates these two worlds is simpler than most founders think: stateful vs. stateless agents.” Most vendors chose stateless because it’s simpler to build, cheaper to operate, and easier to scale across thousands of customers. Your data doesn’t persist because persisting it creates complexity (storage costs, privacy requirements, institutional isolation) that vendors don’t want to manage.
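For readers who want to see the architectural difference in code, here is a deliberately small sketch. It assumes a JSON file as the persistent ledger and a simple "suggest the usual disposition once history is consistent" rule. The class names, the `min_history` parameter, and the pattern label are all hypothetical, and a production system would need the privacy and isolation controls mentioned above.

```python
import json
import tempfile
from pathlib import Path


class StatelessTool:
    """Every call starts from the same generic default; nothing is kept."""

    def suggest(self, pattern: str) -> str:
        return "review"  # same generic answer on day 1 and day 365


class StatefulAgent:
    """Keeps a ledger of human dispositions and consults it on later calls."""

    def __init__(self, ledger_path: Path) -> None:
        self.ledger_path = ledger_path
        # Reload whatever earlier sessions deposited, if anything.
        if ledger_path.exists():
            self.ledger = json.loads(ledger_path.read_text())
        else:
            self.ledger = {}

    def record(self, pattern: str, disposition: str) -> None:
        """Deposit one human decision and persist it across sessions."""
        self.ledger.setdefault(pattern, []).append(disposition)
        self.ledger_path.write_text(json.dumps(self.ledger))

    def suggest(self, pattern: str, min_history: int = 3) -> str:
        """Suggest the usual disposition once enough consistent history exists."""
        history = self.ledger.get(pattern, [])
        if len(history) >= min_history and len(set(history)) == 1:
            return history[0]
        return "review"


with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / "ledger.json"
    session_one = StatefulAgent(path)
    for _ in range(3):
        session_one.record("weekly_cash_deposit_flower_shop", "clear")

    session_two = StatefulAgent(path)  # a new session reading the same ledger
    stateful_answer = session_two.suggest("weekly_cash_deposit_flower_shop")
    stateless_answer = StatelessTool().suggest("weekly_cash_deposit_flower_shop")
    print(stateful_answer, stateless_answer)
```

The only structural difference is the ledger that survives between sessions. Everything the metaphor calls a "balance" lives there.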

I learned this the hard way building Flowroute. When we built telecom infrastructure — the literal pipes that carry voice traffic — we obsessed over state. Every call had context: routing history, quality metrics, failure patterns. The carriers that treated each call as a stateless event couldn’t optimize. The ones that accumulated context across millions of calls built networks that got smarter over time. Infrastructure outlasts products. That principle applies to AI exactly the same way.

The result of stateless AI? You’re paying for a system that makes the same mistakes on day 365 that it made on day one. Every session is a fresh start. Every dollar spent is a withdrawal from your budget with nothing deposited into institutional memory.

The industry spending numbers make this painful. Financial institutions globally spend $23 billion per year on BSA/AML compliance. The false positive rate on transaction monitoring alerts is 95%. If your AI tool resets every session, it cannot learn which of your institution’s alerts are false positives — because it doesn’t remember processing them yesterday. You’re paying for a tool that is structurally incapable of getting better at the thing you need it to do.

The Compounding Curve

Let me put numbers to the metaphor.

Month 1: The opening deposit. Your BSA Runner processes its first 200 alerts. It’s operating in what we call “training wheels” mode — every action reviewed by a human. It’s learning your institution’s patterns, but the human workload hasn’t decreased yet. Think of this as the initial deposit that hasn’t started earning interest. Value delivered: modest. Foundation established: significant.

Month 3: Early interest. The Runner has processed 600-plus alerts. It’s identified that roughly 70% of alerts from Rule 7 (cash transactions near the CTR threshold) are false positives for your membership base — because your community has a high proportion of cash-intensive small businesses. Your BSA analyst now reviews Runner-flagged alerts first, spending time only on the 30% that warrant investigation. Time saved: 8-12 hours per week. The deposits are earning interest.
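The arithmetic behind that 70% figure is simple once dispositions are retained. As a rough sketch, assuming each cleared alert is stored as a rule identifier plus an outcome label (the labels, rule names, and counts below are made up for illustration):

```python
from collections import Counter


def false_positive_rates(dispositions):
    """Return {rule_id: share of alerts closed as false positives}.

    `dispositions` is an iterable of (rule_id, outcome) pairs, where outcome
    is "false_positive" or "investigate" (hypothetical labels).
    """
    totals = Counter()
    false_positives = Counter()
    for rule_id, outcome in dispositions:
        totals[rule_id] += 1
        if outcome == "false_positive":
            false_positives[rule_id] += 1
    return {rule: false_positives[rule] / totals[rule] for rule in totals}


# Made-up ledger: 10 alerts from "rule_7", 7 of them closed as false positives.
ledger = [("rule_7", "false_positive")] * 7 + [("rule_7", "investigate")] * 3
ledger += [("rule_2", "investigate")] * 4 + [("rule_2", "false_positive")] * 1

rates = false_positive_rates(ledger)
# Rules with the lowest false positive rate get human attention first.
review_order = sorted(rates, key=rates.get)
print(rates, review_order)
```

A stateless tool cannot run this calculation at all, because the ledger it depends on is discarded at the end of every session.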

Month 6: Compound acceleration. The Runner has processed 1,200-plus alerts and has now lived through its first seasonal peaks. It has seen alert volume spike in August (back-to-school spending) and December (holiday cash flow) at your institution, and has learned to distinguish seasonal noise from genuine anomalies. It’s drafting SAR narratives in your examiner’s preferred format. Your compliance officer promotes it from training wheels to supervised mode for routine alerts. Time saved: 15-20 hours per week. The interest is compounding.

Month 12: The flywheel. The Runner surfaces a pattern your human analysts hadn’t identified: three members showing coordinated account activity that individually falls below reporting thresholds but collectively suggests structuring. This insight didn’t come from a smarter model. It came from twelve months of accumulated deposits — twelve months of learning which patterns are normal and which are anomalous for your specific institution. Your examiner notices the proactive identification and notes it favorably. The compound interest just produced a dividend. That’s not a demo scenario. That’s a Tuesday.
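To show the shape of that check, here is a simplified sketch. It assumes the hard part, knowing which members are linked, has already been learned from accumulated history, and it only tests whether a linked group's cash deposits each stay at or under the $10,000 CTR threshold while together exceeding it within a short window. The function name, the three-day window, and the sample data are hypothetical; real structuring detection involves far more than this.

```python
from datetime import date, timedelta

# US Currency Transaction Reports cover cash transactions of more than $10,000.
CTR_THRESHOLD = 10_000


def flag_group(deposits, window_days=3):
    """Flag a linked group whose deposits are individually at or under the
    threshold but together exceed it within `window_days`.

    `deposits` is a list of (member_id, day, amount) tuples for members
    already believed to be linked; how they were linked is out of scope.
    """
    if any(amount > CTR_THRESHOLD for _, _, amount in deposits):
        return False  # an individual deposit would already be reportable
    for _, start, _ in deposits:
        end = start + timedelta(days=window_days)
        in_window = [(m, a) for m, d, a in deposits if start <= d < end]
        total = sum(amount for _, amount in in_window)
        members = {member for member, _ in in_window}
        if total > CTR_THRESHOLD and len(members) > 1:
            return True
    return False


# Made-up example: three members, each well under the threshold.
clustered = [
    ("member_a", date(2024, 3, 4), 4_000),
    ("member_b", date(2024, 3, 5), 4_000),
    ("member_c", date(2024, 3, 5), 4_000),
]
print(flag_group(clustered))
```

No single alert fires on any of these deposits. The signal only exists when the system remembers enough to view the three members together.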

Month 18: Institutional memory. Your veteran BSA analyst announces her retirement. Twenty-three years of institutional knowledge. In a stateless world, that knowledge walks out the door — the scenario I described in Article 9 as one of the most urgent risks facing credit unions. In a stateful world, eighteen months of her judgment calls, her corrections, her escalation patterns have been deposited into the Runner. The new hire doesn’t start from zero. They start with a running ledger of institutional intelligence. The compounding curve didn’t just save time. It preserved expertise.

The Invisible Asset

Here’s the part that should concern your CFO and intrigue your board.

This compounding institutional intelligence doesn’t appear anywhere on your balance sheet. There’s no line item for “accumulated agent knowledge.” No GAAP category for “institutional context assets.” No way to mark-to-market the eighteen months of operational deposits your Runners have accumulated.

But the operational impact is real and measurable. Fewer hours per alert. Faster loan processing. Higher first-call resolution. Better examination outcomes. Lower compliance costs. These show up in your income statement as expense reductions and in your member satisfaction scores as improved service. The asset is invisible. The returns are not.

At Runline, we documented this as a foundational architectural belief before reading any VC thesis. When I was building Concreit — an SEC-regulated real estate investing platform — I sat across from regulators who had the authority to end my business. Operating under that kind of scrutiny changes how you build. You don’t bolt compliance on at the end. You architect for it from day one. The same principle applies here: institutional intelligence isn’t a feature you add later. It’s the foundation you build on.

This is genuinely new territory. Credit unions have always had intangible assets — member relationships, brand reputation, staff expertise. But those assets were locked inside people’s heads, unmeasurable and unmanageable. Stateful AI agents make institutional intelligence tangible for the first time. You can measure the deposits (interactions processed), track the balance (accumulated context), and observe the interest (performance improvement over time).
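As a back-of-the-envelope illustration of those three measurements, the sketch below tracks them month by month. The field names and every number in it are assumptions chosen to match the 200-alerts-per-month example used earlier, not measurements from any institution.

```python
from dataclasses import dataclass


@dataclass
class MonthlyRecord:
    month: int
    interactions: int        # "deposits": items the agent processed
    context_entries: int     # "balance": learned patterns stored so far
    autonomous_rate: float   # share of routine work cleared without edits


def interest(records):
    """Month-over-month change in the autonomous rate (the "interest")."""
    return [
        round(later.autonomous_rate - earlier.autonomous_rate, 4)
        for earlier, later in zip(records, records[1:])
    ]


# Illustrative numbers only.
records = [
    MonthlyRecord(month=1, interactions=200, context_entries=40, autonomous_rate=0.00),
    MonthlyRecord(month=2, interactions=200, context_entries=95, autonomous_rate=0.25),
    MonthlyRecord(month=3, interactions=200, context_entries=140, autonomous_rate=0.50),
]

total_deposits = sum(r.interactions for r in records)
current_balance = records[-1].context_entries
monthly_interest = interest(records)
print(total_deposits, current_balance, monthly_interest)
```

None of this belongs on a GAAP balance sheet, but a report like this gives a board something concrete to review each quarter.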

The credit unions that recognize this first will build institutional intelligence that compounds for years while their peers keep making withdrawals against stateless tools that never build a balance.

The Board Conversation

If you’re a credit union CEO, bring this question to your next board meeting:

“Is our AI accumulating institutional intelligence — or discarding it?”

Then ask: How many interactions did our AI tools process last quarter? What did those interactions teach the system about our institution? If we switched AI vendors tomorrow, how much institutional knowledge would we lose?

If the answer to the last question is “none, because the system doesn’t remember anything,” you don’t have an AI strategy. You have a subscription to someone else’s intelligence. And every month you spend making deposits into a system that discards them is a month your institutional intelligence balance stays at zero while the compounding clock ticks.

Credit unions understand deposits. They understand interest. They understand the power of time and compounding to turn modest contributions into transformative assets. The same principles apply to institutional intelligence — if, and only if, the architecture is built to accumulate rather than discard.

Every interaction is a deposit. The question is whether your system keeps the balance.

Sean Hsieh is the Founder & CEO of Runline, the secure agentic platform for credit unions. Previously, he co-founded Flowroute (acquired by Intrado, 2018) and Concreit, an SEC-regulated WealthTech platform managing real securities under dual federal regulatory frameworks.

Next in the series: “Switching Costs You Actually Want,” on why the credit unions that build institutional intelligence create switching costs that benefit them, not just their vendor, and why that’s the opposite of the core processor trap.