Ask any AI vendor who their AI works for, and they’ll say “you, the customer.” Then ask where the data lives, who controls the model, what happens when you cancel the contract, and whether you can take the intelligence with you.

The silence that follows tells you everything.

I’ve been in enterprise software for 15 years: first at Flowroute, where we built telecom infrastructure that carriers couldn’t distinguish from their own network, then at Concreit, where we built an SEC-regulated platform whose compliance architecture was the product. In both cases, the companies that won weren’t the ones with the best features. They were the ones that became inseparable from how their customers operated.

That’s the distinction I want to draw in this article. Not between AI vendors with different feature sets. Between two fundamentally different models for what AI means inside a credit union: renting intelligence versus owning it.

The SaaS Trap, Revisited

In Article 4 — “The SaaSpocalypse” — I argued that the SaaS model has quietly become a trap for credit unions. You don’t own the software. You don’t own the data model. You don’t own the integrations. When you cancel, you get an export file and a 90-day sunset notice.

AI makes this trap dramatically worse.

Salesforce just launched Agentforce — AI agents for sales, service, and marketing at $2 per conversation. Sounds great until you think about what’s happening underneath. Every customer interaction, every deal pattern, every service resolution that flows through Agentforce trains Salesforce’s models. Your best sales playbooks, your most effective objection handling, your institutional knowledge about what makes your customers tick — it all feeds a system that Salesforce owns and improves for every customer on the platform, including your competitors. When you cancel, you get your contact records. Salesforce keeps the intelligence. This isn’t a Salesforce-specific problem. It’s the SaaS AI model: you pay for capability, the vendor captures the knowledge.

The same pattern plays out across financial services. Voice AI vendors process hundreds of millions of conversations — training shared models that improve for all customers simultaneously. That sounds like a benefit until you realize it means your institutional intelligence subsidizes your competitor’s improvement. Workflow automation vendors call their document processing pipelines “agents” — but the intelligence lives in their cloud, governed by their models, learned from your data.

I’m not criticizing any specific vendor. Interface AI has built something genuinely impressive in the credit union space — over $40 million ARR, 100-plus financial institutions, real cost savings on call center operations. Uptiq has $58 million in funding and the Curql Fund’s distribution network behind genuinely useful lending automation. Both are delivering value. The question isn’t whether these products work. It’s who captures the long-term intelligence they generate.

Here’s the spicy take: most “AI agents” in financial services aren’t agents at all. They’re APIs with better marketing. A real agent accumulates context, exercises judgment, improves from experience, and operates with graduated autonomy under human oversight. Document classification with an LLM is not that. Call deflection is not that. Workflow automation with a chatbot front-end is not that.

The distinction matters because it determines who captures the value. When you deploy a tool, the vendor captures the value. When you deploy an agent that learns your institution, you capture the value. And that value compounds in ways that tool rental never can.

What “Owning Your Intelligence” Actually Means

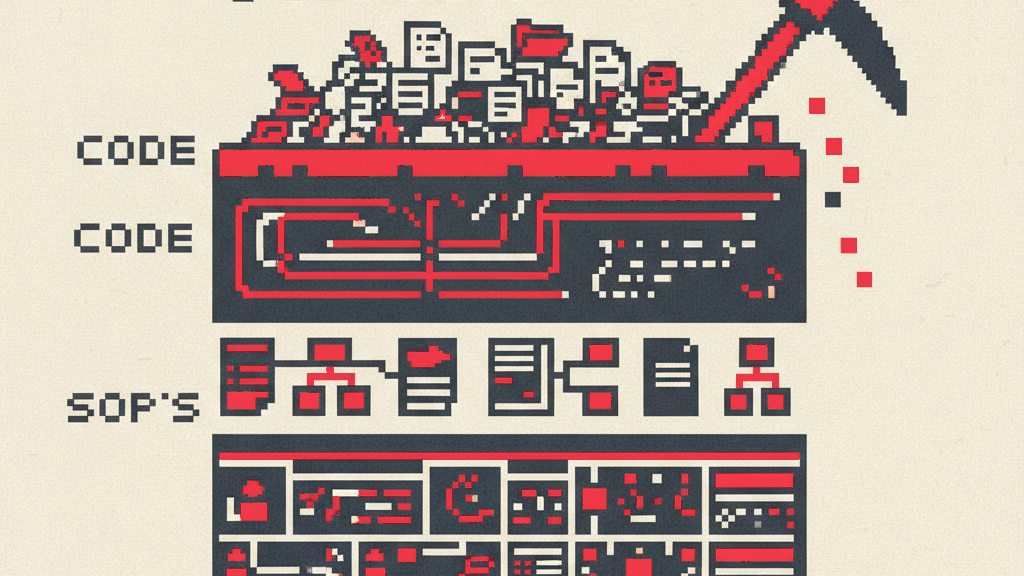

Let me be specific about what I mean, because “you own the AI” sounds like marketing until you can point to architecture.

At Runline, ownership means five things — and I want to walk through each, because this is where the differentiation becomes structural, not rhetorical.

1. Your data layer is physically yours.

Palantir built a $2.8 billion business on a single architectural principle: your data never leaves your infrastructure. When the U.S. Department of Defense deploys Palantir Foundry, the platform runs inside DoD’s own environment. The data stays on government hardware. The intelligence stays under government control. When the NHS deployed Foundry during COVID, patient data never left NHS servers — Palantir provided the orchestration layer, not the data layer. That’s why institutions with the most sensitive data on earth trust Palantir: because Palantir never asks to take the data home.

The same principle should apply to your credit union. Every institution on Runline gets its own data infrastructure. Not a shared database with row-level security. Not a multi-tenant data warehouse with logical separation. Physically separate storage — your ClickHouse instance, your vector indices, your embeddings.

Why does this matter? Because when an examiner asks “where does our member data live and who else can access it?” — the answer is unambiguous. Your data lives in your instance. No other credit union’s queries touch it. No shared model training combines it with anyone else’s. When you cancel Runline, your data infrastructure comes with you — or gets destroyed, your choice. Either way, it’s yours.

I described the six layers of isolation in last week’s multi-core architecture brief: code isolation (per-institution repositories), credential isolation (zero-secret agents behind a proxy), data isolation (physically separate storage), event isolation (organization-scoped audit streams), kill-switch isolation (per-organization enforcement in under 100 milliseconds), and retention isolation (block, drain, purge on contract termination). Each layer is independently auditable. Combined, they create an architecture where your credit union’s intelligence is structurally incapable of leaking to another institution.

Compare this to a shared-model architecture where 100 organizations’ data trains the same neural network. Where does Institution A’s knowledge end and Institution B’s begin? The honest answer is: nobody knows. The embeddings are entangled. The training data is co-mingled. The intelligence is communal. That’s fine for a consumer product. It’s unacceptable for a regulated financial institution.

2. Your operational playbooks are yours.

Every credit union has SOPs — standard operating procedures — but they live in binders, SharePoint folders, and people’s heads. When your veteran BSA officer retires, 25 years of “here’s how we handle this edge case” walks out the door. I wrote about this retirement cliff in Article 16: 4.2 million Americans turned 65 in 2025, and the pace continues through 2027.

Runline encodes your SOPs as Playbooks — declarative specifications that define how a Runner (our term for an AI agent) operates. A BSA Playbook captures your triage logic, your narrative templates, your examiner’s documentation preferences, your institution’s risk thresholds. A lending Playbook encodes your underwriting criteria, your exception policies, your commercial loan review cadence.

These Playbooks are YAML files that your team controls. Not proprietary vendor configurations. Not black-box model weights. Human-readable specifications that your compliance officer can review, your auditor can inspect, and your examiner can validate. When your BSA officer reviews a Runner’s work and corrects a judgment call, that correction feeds back into the Playbook. The institutional knowledge doesn’t just survive — it crystallizes into executable form.

And because Playbooks are declarative and runtime-agnostic, they work with whatever AI infrastructure comes next. Claude today, something else tomorrow. The intelligence encoded in your Playbooks travels with you. You’re not locked into our models or our platform. You’re locked into your own operational knowledge, which is exactly where you should be locked in.
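To make "human-readable and correctable" concrete, here is a minimal Python sketch of what a Playbook might look like once its YAML file has been loaded, and how a staff correction could be recorded against it. This is not Runline's actual schema. The `Playbook` fields, the `apply_correction` helper, and the threshold name are all hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass, replace
from typing import Dict, Tuple


@dataclass(frozen=True)
class Playbook:
    """Hypothetical in-memory form of a Playbook after its YAML file is loaded."""
    name: str
    risk_thresholds: Dict[str, float]
    narrative_template: str
    revisions: Tuple[str, ...] = ()  # human-readable change history


def apply_correction(playbook: Playbook, key: str, new_value: float,
                     reviewer: str, reason: str) -> Playbook:
    """Return a new Playbook with one threshold changed and the change logged."""
    thresholds = dict(playbook.risk_thresholds)  # copy; the original is untouched
    old_value = thresholds.get(key)
    thresholds[key] = new_value
    note = f"{reviewer}: {key} {old_value} -> {new_value} ({reason})"
    return replace(playbook, risk_thresholds=thresholds,
                   revisions=playbook.revisions + (note,))


# Hypothetical BSA Playbook and one officer correction.
bsa = Playbook(
    name="bsa-alert-triage",
    risk_thresholds={"cash_structuring_usd": 9000.0},
    narrative_template="Subject: {member}. Activity: {summary}.",
)
updated = apply_correction(bsa, "cash_structuring_usd", 8500.0,
                           reviewer="bsa_officer",
                           reason="seasonal cash businesses")
for line in updated.revisions:
    print(line)
```

Because each correction produces a new version with a plain-text revision note, an auditor can read the change history without any vendor tooling, which is the property the section argues for.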

3. Your agents are persistent and learn your institution.

Factory AI — whose Missions system I analyzed in Article 15 — builds ephemeral agents. A Worker Droid spins up, executes a task, and disappears. Fresh context every time. No accumulated knowledge. This works brilliantly for software development, where codebases are version-controlled and context is reconstructable.

It doesn’t work for a BSA officer whose examiner prefers narratives structured a specific way. It doesn’t work for a lending team whose commercial borrowers have relationship histories spanning decades. It doesn’t work for a member services department where knowing that Maria called three times about the same issue — and is getting frustrated — is the difference between retention and attrition.

Runline Runners are persistent. They accumulate institutional context over weeks and months of operation. Your BSA Runner processes 200 alerts in its first month, and by month three, it knows that 94% of alerts from a specific rule are false positives at your institution — because your member demographics trigger that pattern legitimately. By month six, it surfaces insights you didn’t ask for: “Three members showed this pattern simultaneously — historically, this cluster correlates with confirmed fraud.”

That’s not a smarter model. It’s the same model with six months of your institutional context. And that context is stored in your data layer, governed by your Playbooks, and owned by your institution. If you switched AI platforms tomorrow, you’d take that accumulated intelligence with you.
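One way to picture "the same model with more context" is a simple tally of how staff disposed of past alerts, kept per rule. The sketch below assumes a hypothetical history of `(rule_id, was_false_positive)` pairs and an illustrative minimum sample size. The rule IDs and the `false_positive_rates` function are invented for this example and do not describe any real monitoring system.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def false_positive_rates(dispositions: Iterable[Tuple[str, bool]],
                         min_alerts: int = 20) -> Dict[str, float]:
    """Per-rule false-positive rate from staff-reviewed alert dispositions.

    Rules with fewer than `min_alerts` reviewed alerts are left out, since a
    rate computed from a handful of alerts is not worth acting on.
    """
    totals: Dict[str, int] = defaultdict(int)
    false_pos: Dict[str, int] = defaultdict(int)
    for rule_id, was_false_positive in dispositions:
        totals[rule_id] += 1
        if was_false_positive:
            false_pos[rule_id] += 1
    return {rule: false_pos[rule] / count
            for rule, count in totals.items() if count >= min_alerts}


# Hypothetical history: 50 reviewed alerts on one rule, 5 on another.
history = ([("RULE-17", True)] * 47
           + [("RULE-17", False)] * 3
           + [("RULE-02", False)] * 5)
rates = false_positive_rates(history)
print(rates)
```

The model never changes here. What changes is the size of `history`, and that history is exactly the kind of institution-specific context that would live in the credit union's own data layer.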

As one founder of an ephemeral-agent company put it publicly: “The system doesn’t remember that last week’s Financial Advisor was brilliant.” We think that memory is the moat. Persistence isn’t a feature. It’s the compounding engine.

4. Trust is graduated and staff-controlled.

I described our four trust tiers in Article 12 — training wheels, supervised, semi-autonomous, autonomous — and the progression criteria: 90% success rate over 20-plus tasks, zero security incidents, consistent escalation adherence.

But what I didn’t emphasize enough is who controls the progression. Your staff does. Not Runline. Not an algorithm. Your BSA officer decides when the BSA Runner moves from drafting SARs for review to filing routine SARs autonomously. Your lending manager decides when the Lending Runner moves from pre-screening to full underwriting preparation. Your HR coordinator decides when the HR Runner handles benefits inquiries without supervision.

This matters because it means the agents become extensions of your team’s judgment. The BSA officer who promoted her Runner from training wheels to supervised after 30 days — she trusts that agent the way she trusts a trained junior analyst, because she personally verified the work quality. The agent’s autonomy reflects her standards, not a vendor’s confidence score.
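The progression rule can be sketched as two separate checks: the numeric criteria, and the staff decision. The Python below is an illustration under stated assumptions, not Runline's implementation. The `RunnerRecord` fields and function names are hypothetical, and "consistent escalation adherence" is interpreted here as zero missed escalations, which is an assumption of this sketch.

```python
from dataclasses import dataclass
from enum import IntEnum


class TrustTier(IntEnum):
    TRAINING_WHEELS = 0
    SUPERVISED = 1
    SEMI_AUTONOMOUS = 2
    AUTONOMOUS = 3


@dataclass
class RunnerRecord:
    """Hypothetical track record for one Runner at its current tier."""
    tier: TrustTier
    tasks_completed: int
    tasks_succeeded: int
    security_incidents: int
    missed_escalations: int  # assumption: adherence means this stays at zero


def meets_criteria(record: RunnerRecord) -> bool:
    """Check the stated criteria: 90% success over 20-plus tasks, no incidents."""
    if record.tasks_completed < 20:
        return False
    success_rate = record.tasks_succeeded / record.tasks_completed
    return (success_rate >= 0.90
            and record.security_incidents == 0
            and record.missed_escalations == 0)


def next_tier(record: RunnerRecord, approved_by_staff: bool) -> TrustTier:
    """Promote one tier only if the criteria are met AND staff approve."""
    if record.tier == TrustTier.AUTONOMOUS:
        return record.tier  # already at the top tier
    if meets_criteria(record) and approved_by_staff:
        return TrustTier(record.tier + 1)
    return record.tier


bsa_runner = RunnerRecord(TrustTier.TRAINING_WHEELS, tasks_completed=30,
                          tasks_succeeded=28, security_incidents=0,
                          missed_escalations=0)
print(next_tier(bsa_runner, approved_by_staff=False).name)
print(next_tier(bsa_runner, approved_by_staff=True).name)
```

The design point is that `meets_criteria` alone never changes the tier. Passing the numbers only makes a Runner eligible, and the promotion itself remains a staff decision.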

Compare this to a SaaS product where the vendor decides what the AI can and can’t do. “Our AI can now handle card disputes autonomously!” Great — but your compliance officer didn’t validate that capability against your risk profile. You’re trusting the vendor’s judgment about when the AI is ready. That’s a fundamentally different relationship.

5. The control plane is examiner-ready by design.

Every action every Runner takes flows through the Grid — our control plane. Authentication, rate limiting, kill-switch enforcement, and complete audit logging. I described this architecture in Article 14: the Grid isn’t overhead. It’s the infrastructure that makes examiners comfortable and staff confident.

But here’s what makes ownership structural: the audit trail belongs to your institution. Every Runner action, every decision, every escalation, every human override — logged in your infrastructure, queryable by your compliance team, presentable to your examiner. Not stored in a vendor’s multi-tenant log aggregator. Not accessible only through the vendor’s dashboard. Yours.

When the NCUA asks “show me the audit trail for every autonomous action your AI took last quarter,” you don’t call your vendor and request a report. You run the query yourself. That’s ownership.
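As a rough illustration of "run the query yourself," the sketch below filters an in-memory list of audit events down to the autonomous actions in a given quarter. It is a plain-Python stand-in: a real deployment would presumably query the institution's own audit store, and the `AuditEvent` fields and function names here are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Iterable, List, Tuple


@dataclass(frozen=True)
class AuditEvent:
    """Hypothetical audit record for a single Runner action."""
    timestamp: datetime
    runner: str
    action: str
    autonomous: bool  # True when no human approved the action beforehand


def quarter_bounds(year: int, quarter: int) -> Tuple[datetime, datetime]:
    """Return the [start, end) UTC bounds of a calendar quarter."""
    if quarter not in (1, 2, 3, 4):
        raise ValueError("quarter must be 1, 2, 3, or 4")
    start = datetime(year, 3 * quarter - 2, 1, tzinfo=timezone.utc)
    if quarter == 4:
        end = datetime(year + 1, 1, 1, tzinfo=timezone.utc)
    else:
        end = datetime(year, 3 * quarter + 1, 1, tzinfo=timezone.utc)
    return start, end


def autonomous_actions(events: Iterable[AuditEvent],
                       year: int, quarter: int) -> List[AuditEvent]:
    """Autonomous actions within the quarter, oldest first."""
    start, end = quarter_bounds(year, quarter)
    matches = [e for e in events
               if e.autonomous and start <= e.timestamp < end]
    return sorted(matches, key=lambda e: e.timestamp)


utc = timezone.utc
log = [
    AuditEvent(datetime(2025, 2, 10, 9, 0, tzinfo=utc), "bsa", "close_alert", True),
    AuditEvent(datetime(2025, 3, 31, 23, 59, tzinfo=utc), "bsa", "draft_sar", False),
    AuditEvent(datetime(2025, 4, 1, 0, 0, tzinfo=utc), "lending", "prescreen", True),
]
for event in autonomous_actions(log, 2025, 1):
    print(event.timestamp.isoformat(), event.runner, event.action)
```

The half-open interval keeps an action logged at midnight on April 1 out of the first-quarter report, which is the kind of boundary detail an examiner may ask about.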

The Compounding Flywheel

These five pieces — physical data isolation, executable Playbooks, persistent agents, staff-controlled trust, and owned audit infrastructure — create a flywheel that accelerates over time.

Months 1-3: Runners operate in training wheels. Staff review every action. The data layer begins populating with normalized core processor data. Playbooks capture initial SOPs. High human involvement, low autonomy.

Months 4-6: Staff promote Runners to supervised mode for routine tasks. The agents start surfacing patterns staff didn’t ask for. Playbooks get refined from edge cases. The data layer has enough history for trend analysis. Staff trust increases measurably.

Months 7-12: Select Runners reach semi-autonomous for validated workflows. BSA Runners draft and file routine SARs with spot-check review. Lending Runners pre-screen and prepare applications end-to-end. The institutional context layer is now rich enough that the agents perform meaningfully better than any fresh deployment could. New staff members get onboarded faster because the Playbooks encode what used to live in veterans’ heads.

Year 2: The credit union operates at a fundamentally different capacity. Not because they added staff, but because their existing staff each manage a team of AI agents that embody the institution’s accumulated expertise. The 50-person credit union operates at 200-person capability — the thesis from Article 12, realized through ownership rather than rental.

Here’s the part that should keep competing vendors up at night: by Month 12, the switching cost isn’t contract lock-in or proprietary format lock-in. It’s value lock-in. The credit union’s Runners have accumulated a year of institutional context. Their Playbooks encode a year of operational refinement. Their staff have built trust relationships with agents they personally promoted through autonomy tiers. Walking away from that isn’t like canceling a SaaS subscription. It’s like firing a trained team and starting over with temps.

That’s not a trap. That’s earned value. The credit union wants to stay — not because they can’t leave, but because leaving means abandoning intelligence they built. And critically, if they do leave, they take the Playbooks, the data, and the institutional knowledge with them. The lock-in is to their own expertise, not to our platform.

The Historical Parallel: Staffing Agencies vs. Your Own Team

I keep coming back to a simple analogy.

Renting AI from a SaaS vendor is like using a staffing agency. The workers show up, they do the job, they leave. You don’t invest in their development. They don’t learn your culture. When the contract ends, they walk away with everything they learned — and the staffing agency sends them to your competitor.

Building AI agents that become yours is like hiring and developing your own team. The investment is higher upfront. The ramp-up takes longer. But after a year, those people know your institution in ways no temp ever could. They anticipate problems before they surface. They make judgment calls informed by institutional context. They train the next generation. And if they leave, you have the documentation, the processes, and the institutional knowledge they helped create.

The credit union movement was built on this principle. Not outsourced labor. Not rented capability. A dedicated team — volunteers, staff, members — investing in an institution they own. AI should work the same way.

The Ownership Spectrum: Lessons from Other Regulated Industries

This isn’t just a credit union problem. It’s a pattern that plays out across every industry where institutional knowledge is the competitive asset.

Healthcare learned this lesson the hard way — and got it right. Epic Systems dominates hospital IT not because their software is the prettiest (it’s famously not), but because Epic deployments become inseparable from how hospitals operate. Clinical workflows, physician order sets, documentation templates, patient flow protocols — all encoded in Epic’s system, all customized to each hospital’s specific practices. The switching cost is enormous, but it’s not vendor lock-in in the traditional sense. It’s value lock-in. Hospitals that spent years encoding their institutional knowledge into Epic aren’t trapped — they’re invested. The intelligence is theirs. The workflows are theirs. Epic is the infrastructure, not the brain.

That’s the model. Not Salesforce, where the vendor captures the intelligence. Not a generic SaaS tool where cancellation means starting over. An infrastructure that embeds so deeply into institutional operations that it becomes indistinguishable from how the organization works — while keeping the institutional knowledge under the organization’s control.

The defense sector validates the same pattern. I mentioned Palantir earlier — $2.8 billion in revenue built on “your data never leaves.” The Pentagon doesn’t use Palantir because Palantir has the best dashboards. They use Palantir because Foundry runs inside their own infrastructure, integrates with their own data sources, and produces intelligence that belongs to them. When a defense analyst builds a workflow in Foundry, that workflow reflects their operational doctrine, not Palantir’s product roadmap.

The Bloomberg Terminal tells the same story from finance. Traders can’t operate without Bloomberg — not because of a contract, but because 30 years of workflow muscle memory, custom analytics, and institutional communication patterns are embedded in the terminal. Bloomberg doesn’t own the trader’s strategies. The trader’s strategies are inseparable from Bloomberg’s infrastructure. That’s earned lock-in. That’s value compounding in the customer’s favor.

Now look at credit union AI through this lens. The question isn’t “which AI vendor has the best features?” It’s “which AI architecture lets your institution build intelligence that compounds in your favor — the way Epic compounds for hospitals, Bloomberg compounds for traders, and Palantir compounds for defense analysts?”

Most AI vendors in our space are building the Salesforce model: centralized intelligence, shared models, vendor-captured value. That’s not wrong — it’s a valid business strategy that delivers real short-term value. But it’s not what credit unions need for the long term. Credit unions need the Epic model: infrastructure that embeds, intelligence that compounds, and ownership that stays with the institution.

The Core + Intelligence Partnership

If you’re a credit union CEO, your core processor is the backbone of your institution. Symitar, DNA, GOLD, Velocity, Sharetec, Corelation — these systems hold decades of member history, process millions of transactions, and keep your institution running 24/7. That’s not trivial. That’s foundational.

But here’s what I’ve noticed talking to credit union leaders over the past year: most of that foundation is underutilized. Your core holds 20 to 30 years of member data — every transaction, every loan, every interaction — and 90% of it sits dormant in batch reports and month-end extracts. The core is doing its job. The question is: what else could that data do if it had an intelligence layer working alongside it?

Credit union technology has moved through two eras and is entering a third. In the Core Era (1970s-2010s), the core was everything: data, logic, reporting, the whole stack. In the Digital Banking Era (2010s-2025), platforms like Banno, Alkami, and Q2 created a presentation layer on top of the core. Members got mobile apps, but the core still powered the engine underneath.

We’re entering the Interaction Era — and this is where the core becomes more valuable, not less. The interaction layer doesn’t replace the core. It unlocks it. It takes the decades of member data your core has faithfully stored and turns it into real-time institutional intelligence — the kind that drives proactive member service, automated compliance, and operational capacity your staff couldn’t achieve alone.

Think about it from Maria’s perspective — the member I described in Article 12. Your core knows Maria’s balance, her loan terms, her transaction history. The interaction layer, fed by real-time data from that core, knows she got a raise last month, that her car loan is 18 months from payoff, and that her spending pattern suggests she should move money before Friday. The core provides the truth. The interaction layer provides the insight. Neither works without the other.

This is why the AI-native credit union isn’t a credit union that replaced its core. It’s a credit union that deeply integrated its core with an intelligence layer — CDC pipelines that stream core data in real time, Runners that execute operations against the core’s APIs, Playbooks that encode business logic informed by the core’s transaction history.

The cores that lean into this partnership win.

Here’s a way to think about it: we’re heading toward a future where AI agents are the primary consumers of your core’s services. Today, humans navigate screens — so core vendors invest in UX design, dashboards, and member-facing portals. Tomorrow, agents navigate APIs. In an agent-driven world, your API is your UX. A well-designed, real-time API — structured action endpoints, event streams, webhook integrations — is the equivalent of beautiful product design. It’s what makes agents want to work with your core, what makes integrations seamless, what makes the intelligence layer sing.

A core processor with a great API surface becomes dramatically more valuable to its credit unions in the Interaction Era. The core that makes it easy for AI agents to read member context, execute transactions with approval gates, and subscribe to real-time events isn’t being commoditized. It’s being elevated. It becomes the essential foundation of an AI-native institution — the foundation that agents build on, the way iOS became the foundation that millions of apps built on.

This is why we’re excited about partnerships with forward-thinking core providers. The conversation isn’t “core versus intelligence layer.” It’s “core plus intelligence layer” — a combination that makes credit unions operationally capable in ways that neither layer delivers alone. The cores that invest in agent-ready APIs aren’t just keeping up. They’re positioning themselves as the platform that powers the next era of credit union operations.

Practically, this means:

A credit union on any modern core can deploy the interaction layer today, with CDC pipelines that normalize core data in real time. The Playbooks, the institutional context, the Runners’ accumulated knowledge — all of it is built on top of the core’s foundation. The deeper the core integration, the smarter the Runners become, the more value the credit union extracts from the data their core has been faithfully storing for decades.
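What "normalizing core data" means in practice is easiest to see with two sources that describe the same thing differently. The sketch below maps change events from two made-up cores into one unified record. The source names `core_a` and `core_b`, their field names, and the `MemberBalance` schema are all hypothetical and do not reflect any real core processor's data model.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import Any, Callable, Dict


@dataclass(frozen=True)
class MemberBalance:
    """Hypothetical unified record consumed by the intelligence layer."""
    member_id: str
    account_id: str
    balance_cents: int


def from_core_a(row: Dict[str, Any]) -> MemberBalance:
    # Assumed shape: numeric IDs and a dollar amount stored as a string.
    cents = int(Decimal(str(row["BAL"])) * 100)
    return MemberBalance(str(row["MBR_NO"]), str(row["ACCT"]), cents)


def from_core_b(row: Dict[str, Any]) -> MemberBalance:
    # Assumed shape: string IDs and an integer amount already in cents.
    return MemberBalance(row["memberId"], row["accountId"],
                         int(row["balanceCents"]))


NORMALIZERS: Dict[str, Callable[[Dict[str, Any]], MemberBalance]] = {
    "core_a": from_core_a,
    "core_b": from_core_b,
}


def normalize(change_event: Dict[str, Any]) -> MemberBalance:
    """Map one change event (source + new row state) to the unified schema."""
    source = change_event["source"]
    if source not in NORMALIZERS:
        raise ValueError(f"no normalizer registered for source {source!r}")
    return NORMALIZERS[source](change_event["after"])


event_a = {"source": "core_a",
           "after": {"MBR_NO": 1042, "ACCT": 7, "BAL": "1250.75"}}
event_b = {"source": "core_b",
           "after": {"memberId": "1042", "accountId": "7",
                     "balanceCents": 125075}}
print(normalize(event_a))
print(normalize(event_a) == normalize(event_b))
```

Each new core adds one normalizer function rather than a change to everything downstream, which is why the per-core engineering effort sits in the integration and not in the Runners or Playbooks.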

Sixty-nine percent of credit unions plan to stay on their current core. That’s not inertia — it’s pragmatism. The core works. What’s missing isn’t a different core. It’s the intelligence layer that makes the current core dramatically more capable. The credit unions that pair a strong core foundation with an owned intelligence layer will operate at a fundamentally different level — and their core partners will be integral to making that happen.

Why This Is Hard to Replicate

A competitor reading this might think: “Fine, we’ll add data isolation and Playbooks.” But the moat isn’t any single feature. It’s the integration of all five ownership layers — and the months of institutional context that accumulate within them.

To replicate Runline’s approach, a competitor would need:

Deep core processor partnerships across Symitar, GOLD, DNA, Velocity, Sharetec, Corelation — with CDC (Change Data Capture) pipelines that normalize each core’s data model into a unified schema the intelligence layer can consume. That’s six to twelve months of joint engineering per core processor, built on trust and close technical collaboration with each core provider. We detailed this architecture in the multi-core isolation brief.

Regulatory domain expertise that produces examiner-ready infrastructure from day one — not compliance bolted on after product-market fit. Our Grid control plane, our kill-switch architecture, our approval gate system — these were designed for NCUA examination, not adapted for it.

An operational deployment model that produces the compounding flywheel — persistent agents, trust progression, Playbook refinement, staff-controlled autonomy. This requires a fundamentally different product architecture than SaaS workflow automation. You can’t bolt “institutional learning” onto a stateless API.

Time. A credit union that’s been running Runline for 12 months has 12 months of accumulated institutional context that no fresh deployment can match. The first-mover advantage isn’t about features. It’s about intelligence accumulation. Every month a competitor delays building this architecture is a month its credit union customers fall further behind on the compounding curve.

The Board Question

If you’re a credit union CEO reading this, here’s the question I’d bring to your next board meeting:

“When we deploy AI, who captures the intelligence — us or the vendor?”

Then ask four follow-up questions:

- Where does our data live? If the answer involves “multi-tenant” or “shared infrastructure,” your institutional data is co-mingled with other institutions’. Ask what happens to learned patterns when you cancel.

- Who controls what the AI can do? If the vendor decides when the AI handles new task categories, your staff isn’t in control. Ask whether your compliance officer can independently adjust autonomy levels.

- What do we keep if we leave? If the answer is “an export file,” you’re renting. If the answer is “your Playbooks, your data layer, and your institutional context,” you’re building.

- Does the AI get better at our institution specifically, or at all institutions generically? Generic improvement is nice. Institution-specific improvement is a moat.

The credit unions that ask these questions now — while the market is still forming and the switching costs are low — will build institutional intelligence that compounds for years. The ones that sign SaaS contracts and worry about ownership later will be renting capability at ever-increasing prices, with nothing to show for it when the contract ends.

The Mission Alignment

I’ll close with this.

Credit unions exist because a group of people decided that financial services should serve communities, not extract from them. The cooperative model — member-owned, member-governed, member-benefiting — is a 180-year rejection of the idea that financial infrastructure should be controlled by entities whose interests diverge from the people they serve.

AI should follow the same principle.

Your intelligence should serve your members, not train your vendor’s model. Your operational knowledge should compound in your institution, not in a SaaS provider’s multi-tenant cloud. Your staff should control your agents’ behavior, not accept a vendor’s judgment about what the AI should be trusted to do.

When your BSA Runner drafts a SAR narrative and your compliance officer refines it, that refinement should make your Runner better — not every agent on the vendor’s platform. When your lending team discovers an edge case and updates the Playbook, that institutional learning should be yours — not shared with the credit union across town that happens to use the same vendor.

Epic understood this in healthcare. Palantir understood this in defense. Bloomberg understood this in capital markets. The institutions that thrive in regulated industries don’t rent intelligence from vendors. They build intelligence on infrastructure they own.

Credit unions deserve the same.

Agents that become your agents. Intelligence that becomes your intelligence. A workforce that becomes your workforce — governed by your staff, trained on your data, learning your institution, improving every month.

Your agents. Not ours. That’s the point.

Sean Hsieh is the Founder & CEO of Runline, the secure agentic platform for credit unions. Previously, he co-founded Flowroute (acquired by Intrado, 2018) and Concreit, an SEC-regulated WealthTech platform managing real securities under dual federal regulatory frameworks.

Next in the series: “The Regulator as Ally: How NCUA’s AI Guidance Is the Best Design Spec You’ll Ever Get” — why the institutions that read NCUA letters as product requirements, not compliance burdens, will build the AI infrastructure that wins.